Early Release · Open Source · Anthropic + OpenAI

One platform. Five ways to work with AI.

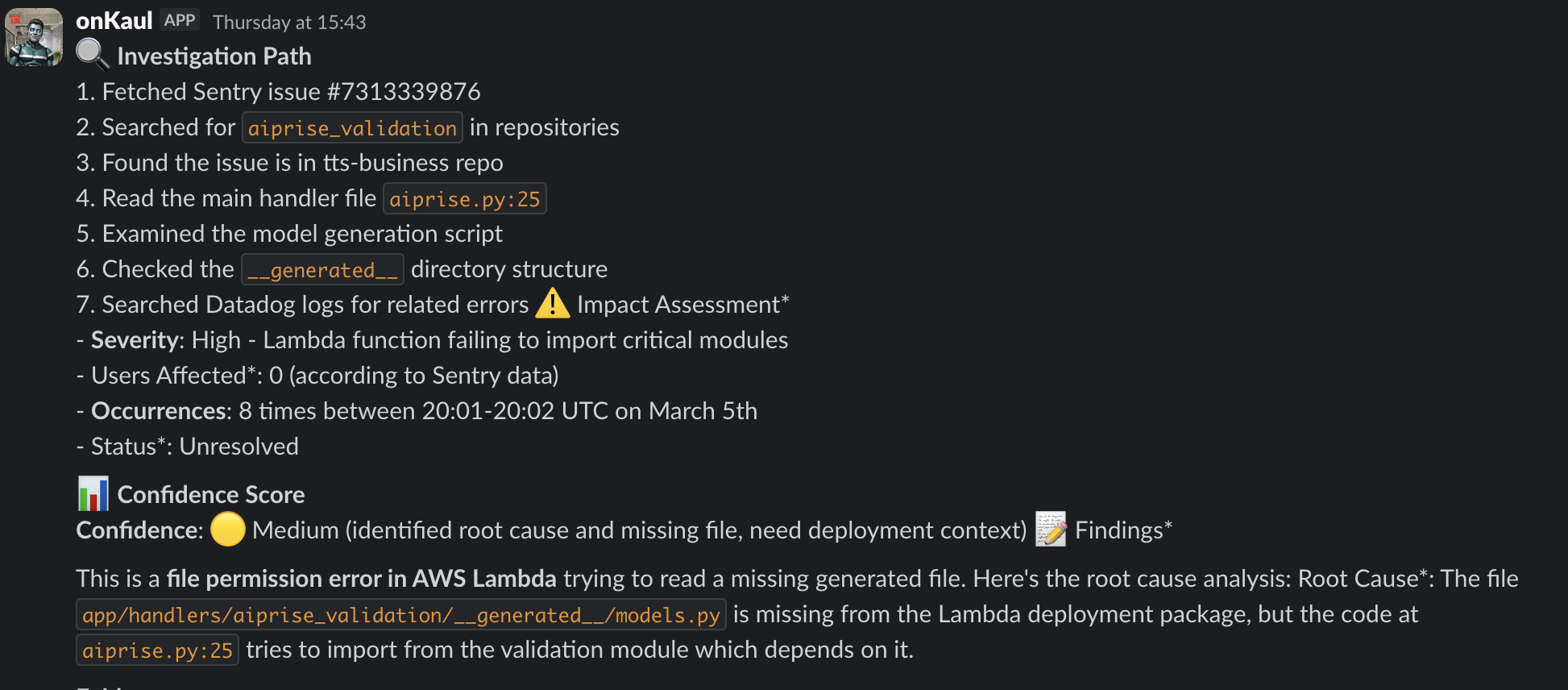

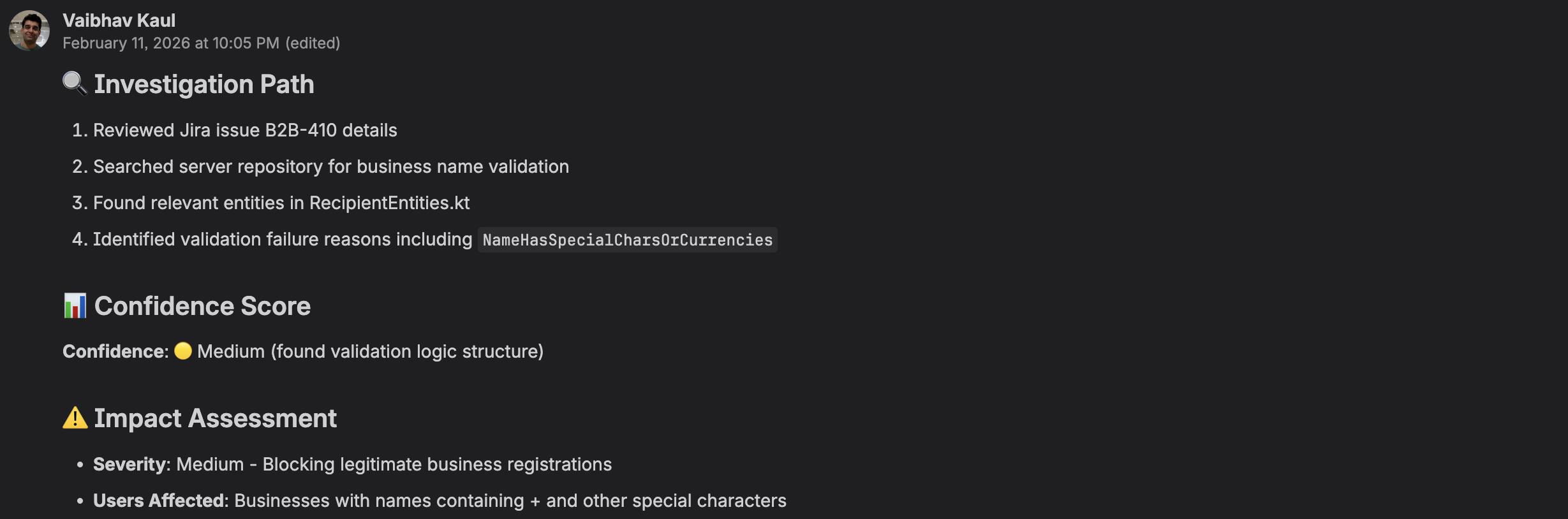

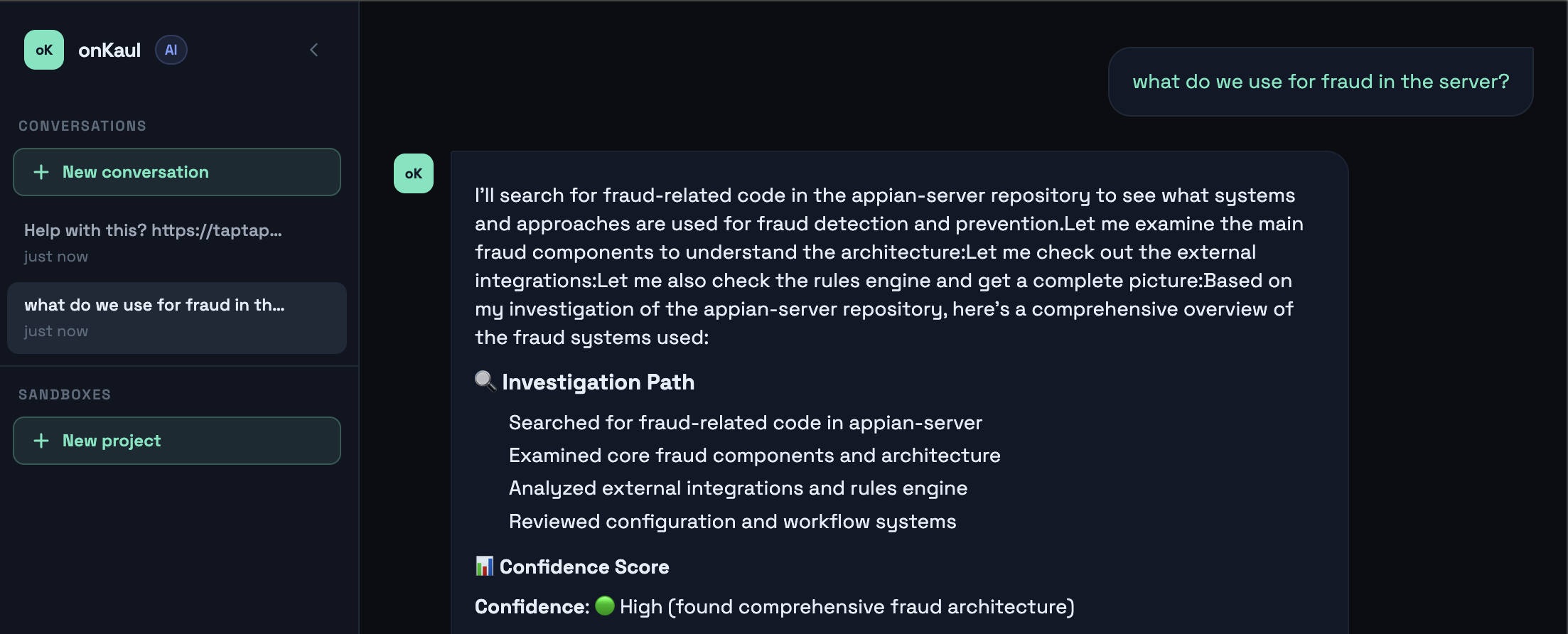

onKaul is an open-source AI developer platform with a pluggable intelligence core — investigate incidents in Slack, triage Jira tickets, build in the browser with an embedded coding agent, and pair-program with teammates in real time.

Connect Sentry, Datadog, GitHub, Jira, and Confluence once. Then ask from anywhere: a Slack thread, a Jira comment, the CLI, or the web UI. The bee-worker queue processes each request asynchronously, so nobody has to sit and wait while a request runs.
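To make the asynchronous hand-off concrete, here is a minimal sketch of the general pattern: requests from any interface go onto a queue, and a pool of workers drains it. It uses Python's standard `asyncio` only. The names `worker`, `run_jobs`, and the job fields `source` and `question` are hypothetical illustrations, not onKaul's actual API or its real queue backend.

```python
import asyncio


async def worker(name, queue, results):
    """Pull jobs off the shared queue until cancelled (hypothetical worker)."""
    while True:
        job = await queue.get()
        try:
            # Stand-in for real work (calling an LLM, querying Sentry, etc.).
            await asyncio.sleep(0)
            results.append((name, job["source"], job["question"]))
        finally:
            # Mark the job finished so queue.join() can return.
            queue.task_done()


async def run_jobs(jobs, num_workers=2):
    """Enqueue every job, process them with num_workers workers, return results."""
    queue = asyncio.Queue()
    results = []
    workers = [
        asyncio.create_task(worker(f"bee-{i}", queue, results))
        for i in range(num_workers)
    ]
    for job in jobs:
        # Enqueueing returns immediately; the caller is not blocked on the work.
        queue.put_nowait(job)
    await queue.join()  # wait until every job has been processed
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results
```

Calling `asyncio.run(run_jobs(jobs, num_workers=4))` with a list of job dictionaries processes them with four workers. Raising `num_workers` is the in-process analogue of running more workers; a real deployment would presumably use a shared external queue so that workers can run on separate machines.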

Slack Bot · Jira Bot · CLI · Web Chat · Sandbox

Slack, Jira, CLI, Web Chat, Sandbox — one platform for all of them

Sentry, Datadog, GitHub, Jira, Confluence, Brave Search, and more

Anthropic and OpenAI — switch with a single env var

4K down to Galaxy S24 — preview your builds on any screen

Scale horizontally — run as many bee workers as you need

Static HTML, Vite/React, or Fullstack (Vite + FastAPI)

Multiplayer sessions: guests join the live preview and shared terminal instantly

MIT licensed — self-host on Docker, EC2, or ECS. No vendor lock-in

Drag images and files straight into the sandbox, and the agent can use them immediately
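The feature list above mentions switching between Anthropic and OpenAI with a single env var. The sketch below shows one way such a switch could be read and validated at startup. The variable name `LLM_PROVIDER`, the default value, and the function `select_provider` are assumptions made for illustration; the source does not name the actual variable, so check the project's configuration docs for the real one.

```python
import os

# The two providers named in the source; the lowercase spellings are an assumption.
SUPPORTED_PROVIDERS = ("anthropic", "openai")


def select_provider(env=None, var_name="LLM_PROVIDER", default="anthropic"):
    """Return the configured provider name, validated against the supported set.

    var_name and default are hypothetical; they are not taken from onKaul.
    """
    env = os.environ if env is None else env
    provider = env.get(var_name, default).strip().lower()
    if provider not in SUPPORTED_PROVIDERS:
        raise ValueError(
            f"{var_name}={provider!r} is not supported; "
            f"expected one of {SUPPORTED_PROVIDERS}"
        )
    return provider
```

Validating the value once at startup means a typo in the environment fails fast instead of surfacing later as a confusing API error.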